Table of Contents

- How Flashka AI Interprets and Breaks Down Study Material

- Flashka AI’s Accuracy vs Human-Created Flashcards

- Flashka AI Performance Across Different Subjects

- Flashka AI and the Hidden Work of Verifying AI Output

- Where Flashka AI Fails to Capture Complex Ideas

- How Flashka AI’s Credit System Shapes Study Behavior

- Long-Term Learning Effects of Using Flashka AI

- Final Thoughts

Over the last decade, student learning has gradually moved away from active note-making and toward algorithmic assistance. With tools like Flashka AI, learners no longer generate study material manually; they outsource extraction, summarization, and flashcard preparation to machine models.

This change has academic implications.

While algorithmic studying offers speed, it also threatens several foundational skills: interpretation, prioritization, cognitive mapping, and critical reading. Understanding Flashka AI requires examining not just what it produces, but what the student loses in the process.

Flashka AI enters a landscape where speed is prized, but depth is sometimes sacrificed without users realizing it.

How Flashka AI Interprets and Breaks Down Study Material

Flashka uses a multi-layered extraction pipeline to convert large documents into flashcards. Its core process follows these steps:

1. Structural Detection

It identifies headers, bullet points, bold text, and recurring terminology.

These elements heavily influence what Flashka decides is “important.”

2. Concept Mapping

The model groups statements based on semantic similarity, compresses related ideas, and attempts to produce a lean definition.

3. Information Prioritization

Flashka weighs frequently used terms more heavily, sometimes overvaluing repeated content while undervaluing subtle details.

4. Reduction into Q&A Format

Complex explanations are trimmed, examples removed, and context minimized.

5. Generation of Memory Units

Everything is reformatted into short, recall-friendly blocks.

The process is efficient, but academically, the risk is clear: context is stripped before the learner ever sees it.
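Flashka does not document how its prioritization step works internally. The following is therefore only a hypothetical Python sketch, assuming a simple word-frequency heuristic. It shows how frequency-based prioritization can push a rare but important caveat to the bottom of the ranking. The function names and stopword list are illustrative and are not taken from Flashka.

```python
import re
from collections import Counter

# Hypothetical illustration of frequency-based prioritization (step 3).
# This is NOT Flashka's actual code; its internals are not public.
STOPWORDS = {"a", "an", "the", "is", "are", "of", "and", "to", "in", "it"}


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens with common stopwords removed."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def rank_sentences(text: str) -> list[tuple[float, str]]:
    """Score each sentence by the average document frequency of its words.

    Returns (score, sentence) pairs, highest score first.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(tokenize(text))
    scored = []
    for sentence in sentences:
        words = tokenize(sentence)
        # Sentences built from frequently repeated terms score higher.
        score = sum(freq[w] for w in words) / len(words) if words else 0.0
        scored.append((score, sentence))
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


notes = (
    "Enzymes speed up reactions. Enzymes are proteins. "
    "Enzymes lower activation energy. "
    "Extreme heat can denature enzymes, stopping catalysis."
)
for score, sentence in rank_sentences(notes):
    print(f"{score:.2f}  {sentence}")
```

In this toy run, the sentence about heat denaturation ranks last because most of its words appear only once. It is also the detail most likely to matter on an exam.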

Flashka AI’s Accuracy vs Human-Created Flashcards

Human-generated flashcards reflect:

- subjective understanding

- individualized phrasing

- focus on personal weaknesses

- emotionally tagged memory points

- context-based interpretation

Flashka AI removes these layers.

Its output tends to be:

- fact-dense

- oversimplified

- context-reduced

- occasionally inconsistent

- vulnerable to misinterpretation

Based on qualitative user reports from platforms like Trustpilot, Reddit’s r/GetStudying, and Flashka’s App Store reviews, accuracy varies significantly between topics.

In essence: Flashka AI is precise with surface-level facts but struggles with context-dependent or multi-step concepts.

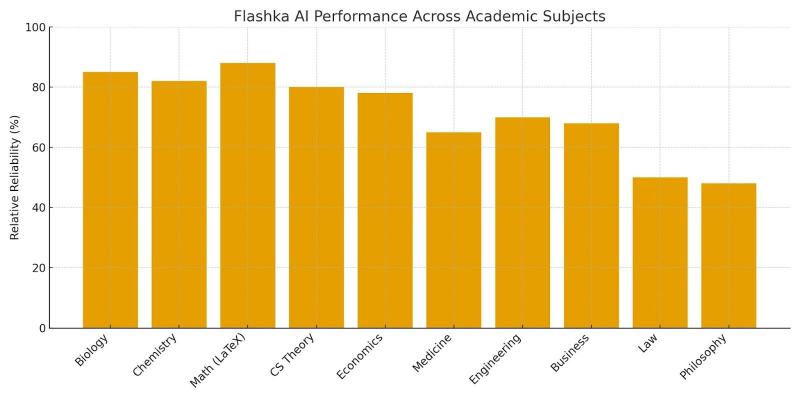

Flashka AI Performance Across Different Subjects

A subject-by-subject evaluation reveals a predictable trend:

High reliability

Flashka performs well in domains with structured definitions:

- Genetics & biology terminology

- Chemistry reactions & formulae

- Mathematics with LaTeX-heavy notation

- CS concepts like algorithms or OS theory

- Economics with standardized definitions

Variable reliability

Subjects requiring interpretation or procedural logic show mixed outcomes:

- Mechanical engineering

- Human physiology

- Business case analysis

- Large data theory sections

Low reliability

Flashka routinely struggles in:

- Case law (missing exceptions/clauses)

- Ethics/philosophy (oversimplification)

- Literature (removal of interpretive context)

- Psychology theories requiring nuance

The deeper and more context-driven the subject, the weaker the AI’s output becomes.

Flashka AI and the Hidden Work of Verifying AI Output

Many students assume automation reduces workload, but Flashka shifts effort rather than removing it.

Students must still:

- Check definitions manually

- Identify misinterpretations

- Compare the content with the original notes

- Delete incorrect cards

- Reorganize misplaced concepts

- Ensure terminology consistency

In some subjects, verification can take longer than manual card creation.

The tool saves typing time, not intellectual effort. That is a crucial distinction.
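Part of this checking can be semi-automated. The sketch below is a hypothetical helper. It assumes the generated cards can be exported as a question-to-answer mapping, which is not a documented Flashka feature. The helper flags answer words of five or more letters that never appear in the original notes. It catches unsupported vocabulary only: a card that reuses the notes' wording but garbles its meaning will still pass.

```python
import re

# Hypothetical verification helper; assumes cards are available as a
# {question: answer} dict and the source notes as plain text.


def unsupported_terms(card_answer: str, source_notes: str, min_len: int = 5) -> set[str]:
    """Return answer words (at least min_len letters) that never appear in the notes."""
    source_words = set(re.findall(r"[a-z]+", source_notes.lower()))
    card_words = {
        w for w in re.findall(r"[a-z]+", card_answer.lower()) if len(w) >= min_len
    }
    return card_words - source_words


def flag_cards(cards: dict[str, str], source_notes: str) -> dict[str, set[str]]:
    """Map each suspicious question to the unsupported terms in its answer."""
    flagged = {}
    for question, answer in cards.items():
        missing = unsupported_terms(answer, source_notes)
        if missing:
            flagged[question] = missing
    return flagged


notes = "Mitochondria produce ATP through cellular respiration."
cards = {
    "What do mitochondria produce?": "ATP via cellular respiration",
    "Where is ATP made?": "In the chloroplast",
}
print(flag_cards(cards, notes))
```

Here only the second card is flagged, because "chloroplast" does not appear anywhere in the notes.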

Where Flashka AI Fails to Capture Complex Ideas

Flashka’s limitations are structural, not accidental. AI models struggle with:

- nested logic (“X applies only if Y follows Z”)

- cross-chapter linkage

- ambiguous or multi-interpretive themes

- exceptions and sub-exceptions

- context that depends on narrative flow

- causal relationships in long arguments

This means Flashka may produce cards that are technically correct but conceptually weak, which offer little help during high-stakes exams.

Does Flashka AI Improve Retention? A Cognitive Review

Retention is shaped by:

- effortful retrieval

- elaboration

- active note construction

- metacognition

- repeated cognitive exposure

Flashka bypasses the construction phase, which is often the most important for deep learning.

Users get ready-made cards but lose the benefit of “learning by creating.”

In cognitive science terms, Flashka supports maintenance rehearsal, not elaborative rehearsal, which limits depth of understanding.

Flashka AI’s Compression Risks in Dense Academic Topics

Flashka aggressively compresses content.

Compression errors include:

- dropping conditional statements

- skipping exceptions

- merging multiple concepts into one

- incorrectly simplifying examples

- removing context needed for exam reasoning

This is particularly dangerous in:

- medical topics

- legal doctrines

- physics

- advanced finance

- statistics

In these fields, a single missing qualifier can turn a correct idea into an incorrect one.
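To see how one dropped qualifier flips a statement, consider a deliberately naive compressor. It cuts a sentence at its first lowercase conditional word ("unless", "except", "only if", "provided that", "if"). This is a hypothetical illustration of the failure mode, not a description of how Flashka actually compresses text.

```python
import re

# Hypothetical naive compressor: keep only the text before the first
# mid-sentence qualifier. Matching is case-sensitive, so a sentence that
# *starts* with "If" or "Unless" is left untouched.
QUALIFIERS = r"\b(unless|except|only if|provided that|if)\b"


def naive_compress(statement: str) -> str:
    """Truncate a statement at its first qualifier and re-terminate it."""
    head = re.split(QUALIFIERS, statement, maxsplit=1)[0]
    return head.rstrip(" ,;.") + "."


original = "Momentum is conserved unless a net external force acts on the system."
print(naive_compress(original))
```

The compressed card ("Momentum is conserved.") is shorter and grammatical, yet it is wrong whenever a net external force acts on the system.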

Flashka AI Data Handling and Upload Privacy Concerns

Flashka processes user-uploaded materials, which often include:

- copyrighted textbook scans

- institutional lecture slides

- medical study notes

- personal annotations

- proprietary research documents

Key privacy concerns:

- storage duration is unclear

- anonymization practices are unspecified

- future training usage is not well-defined

- deletion guarantees are not publicly transparent

Students handling sensitive academic materials should be cautious.

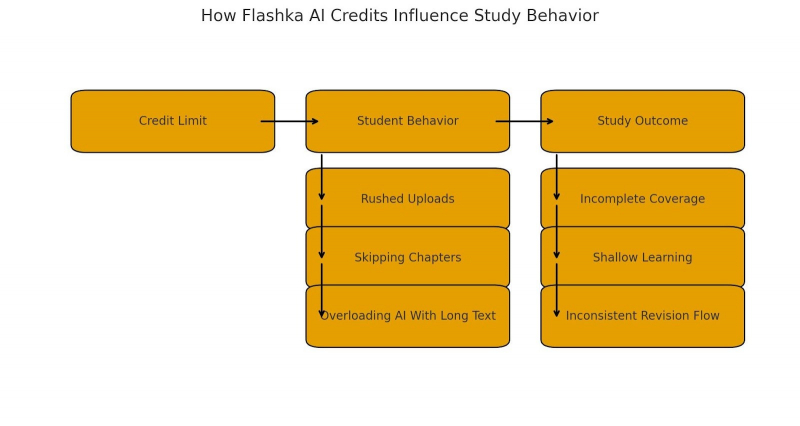

How Flashka AI’s Credit System Shapes Study Behavior

The credit model affects studying patterns:

- Students may rush through uploads to conserve credits

- Large courses become difficult to translate within limits

- Users may upload incomplete chapters

- Some rely heavily on daily free credits, disrupting consistent study flow

- Others compress text poorly to stay within quotas

Credit-driven learning can become quantity-focused rather than quality-focused.

Error Variability in Flashka AI Output

Flashka’s output is inconsistent due to model variability:

- Same topic, different formats

- Occasional hallucinated phrasing

- Redundant facts in one deck, missing facts in another

- Inconsistency in terminology (“metabolic rate” vs “metabolic activity”)

- Structural repetition in some subjects

This inconsistency forces learners to invest extra time in cleanup.
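Terminology drift like "metabolic rate" versus "metabolic activity" can be spotted with a short script. The hypothetical sketch below makes two assumptions: that each deck can be exported as a list of card texts, and that you supply the head words to watch. For each watched word, it collects every two-word phrase starting with that word across all decks. It reports the word only when more than one variant appears. A flagged pair is a candidate for review, not proof of an error, since some variants name genuinely different concepts.

```python
import re
from collections import defaultdict

# Hypothetical consistency check; deck export format and head words are
# assumptions supplied by the user, not Flashka features.


def term_variants(decks: dict[str, list[str]], head_words: set[str]) -> dict[str, set[str]]:
    """Collect two-word phrases beginning with each head word across all decks.

    Only head words that appear with more than one following word are returned.
    """
    variants = defaultdict(set)
    for cards in decks.values():
        for text in cards:
            words = re.findall(r"[a-z]+", text.lower())
            for first, second in zip(words, words[1:]):
                if first in head_words:
                    variants[first].add(f"{first} {second}")
    return {head: phrases for head, phrases in variants.items() if len(phrases) > 1}


decks = {
    "Deck A": ["Thyroid hormone raises metabolic rate."],
    "Deck B": ["Exercise increases metabolic activity."],
}
print(term_variants(decks, {"metabolic"}))
```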

Flashka AI vs Human Revision Patterns

Human revision tends to fail visibly; errors stand out.

AI-generated revision fails covertly; its errors look polished.

Human errors

- usually obvious

- easy to detect

- based on misremembering, not misinterpretation

AI errors

- syntactically perfect

- semantically incorrect

- highly believable

- difficult to catch without deep domain knowledge

This is why Flashka is better suited to memorization than to conceptual subjects, where its plausible-looking errors are hardest to catch.

Flashka AI Compared With Traditional Study Systems

Traditional systems rely on:

- deliberate information selection

- conceptual note-making

- strong recall pathways

- deeper engagement with text

Flashka replaces these with automation, producing:

- faster surface-level coverage

- weaker conceptual mapping

- inconsistent depth

- reduced cognitive load

For quick revision, Flashka works.

For robust understanding, traditional systems remain more reliable.

Long-Term Learning Effects of Using Flashka AI

Potential long-term academic consequences:

Positive

- rapid recall

- better spaced repetition discipline

- more exposure cycles

Negative

- shallow reasoning skills

- reduced ability to construct mental models

- weaker comprehension

- early dependency on AI summaries

- less academic resilience when facing complex questions

AI flashcards provide speed, but speed is not mastery.

When Flashka AI Works Well, and When It Doesn’t

Works well for:

- fact-heavy courses

- early-semester material review

- quick revisions before tests

- structured STEM definitions

- simple Q&A subjects

Weak performance in:

- analytical exams

- open-ended interpretation subjects

- context-heavy fields

- professional certifications needing deep reasoning

It’s a tool best used with caution and not as a standalone study method.

Flashka AI’s Overall Academic Trade-Offs

Using Flashka AI involves balancing:

- Speed vs comprehension

- Convenience vs depth

- Coverage vs critical thinking

- Automation vs self-construction

Flashka is not inherently “good” or “bad.”

It is simply a tool with clear strengths, clear limitations, and clear risks if used uncritically.

Final Thoughts

Flashka AI represents a broader trend: students outsourcing cognitive processing to automation.

While the tool accelerates content preparation, it simultaneously weakens the cognitive steps that produce deep, lasting understanding.

The tool is useful in short bursts, especially for subjects where definitions or formulas dominate. But for complex, layered disciplines, Flashka’s reductions can be academically risky.

If students rely too heavily on AI-generated flashcards, they may build knowledge structures on shallow foundations, which collapse when challenged by real analytical tasks.

Flashka AI is best treated as an assistant, not a replacement for active studying.

Summary

- Flashka AI converts text into flashcards quickly

- Accuracy varies sharply across subjects

- AI reduces cognitive engagement, which may affect long-term retention

- Flashka struggles with nuance, exceptions, and logic-heavy topics

- Verification is still required, sometimes extensively

- Data handling transparency remains limited

- The credit system can distort study habits

- Flashka works best for factual memorization, not conceptual mastery

My Rating

Accuracy: 6.8 / 10

Good for definitions, inconsistent for complex topics.

Consistency: 6.2 / 10

Output varies significantly across chapters and subjects.

Cognitive Value: 5.5 / 10

Improves recall but weakens deeper processing.

Subject Coverage: 7.4 / 10

Strong in STEM and definitions, weaker in interpretation-based fields.

User Experience: 7.8 / 10

Fast, clean, mobile-friendly.

Overall Reliability: 6.5 / 10

Verdict:

Flashka AI is efficient but academically shallow.

Useful for surface-level learning, unsafe as a primary study method.
