Organizations handle growing volumes of online information every day, and manual collection often slows progress. Teams spend long stretches copying records, verifying accuracy, and organizing files before they can begin their actual analysis. Automation cuts the time spent on these repetitive tasks while leaving decisions in human hands. When organizations streamline how data moves from sources into structured systems, they can gain productivity and reduce errors. Professionals can focus on generating insights while data collection runs automatically. The results are better support for operational decisions and faster report generation.

The Shift from Manual to Automated Data Collection

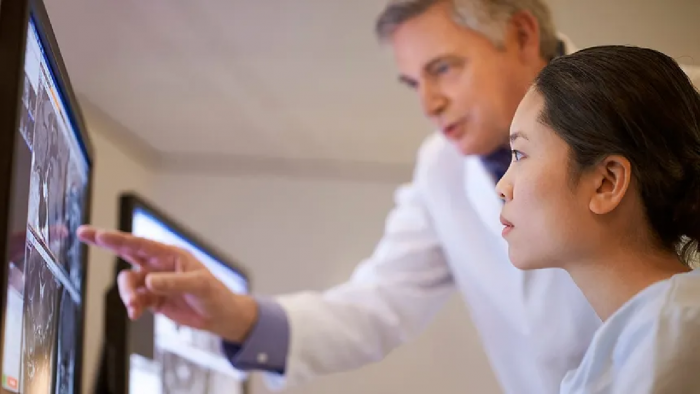

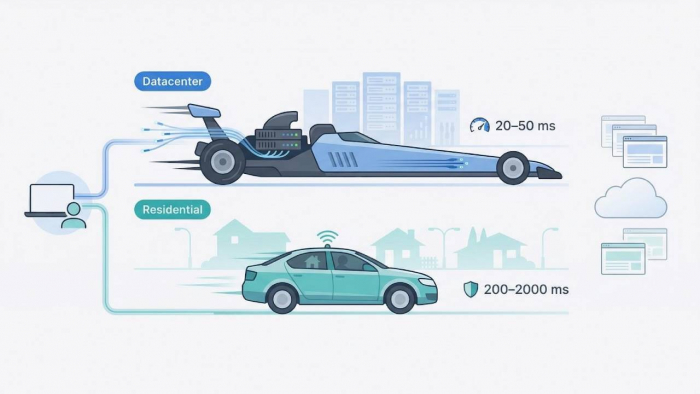

Traditional data collection depends heavily on human attention, which limits scale and consistency. Automation tools now replace repetitive steps with controlled scripts that follow predefined rules. Within this approach, a scraping browser supports smart extraction by simulating user actions while capturing structured outputs for storage and review. This method shortens task duration and improves repeatability without demanding advanced programming knowledge from every team member.

Core Capabilities of Scraping Browsers

Scraping browsers combine automation with visual control, making them practical for varied workflows. Their features support accuracy, speed, and adaptability across data tasks.

- Automated page interaction captures structured records without manual copying effort.

- Visual selectors help users define data points clearly for consistent retrieval.

- Scheduled runs ensure timely updates without constant supervision from staff.

- Export options deliver clean datasets ready for analysis or reporting tools.
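
The selector idea in the list above can be sketched in a few lines. This is a minimal illustration using only Python's standard library, not how any particular scraping browser works: it applies the extraction rule to a static HTML string instead of driving a real browser, and the class names (`item`, `name`, `price`), the sample markup, and the `RecordExtractor` helper are all hypothetical.

```python
from html.parser import HTMLParser


class RecordExtractor(HTMLParser):
    """Collect text from elements whose class matches a configured field name."""

    def __init__(self, row_class, field_classes):
        super().__init__()
        self.row_class = row_class              # class that marks one record
        self.field_classes = set(field_classes) # classes that mark data points
        self.records = []
        self._current = None                    # record currently being built
        self._field = None                      # field whose text is being read

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.row_class in classes:
            # A new row starts a new record.
            self._current = {}
            self.records.append(self._current)
        elif self._current is not None:
            for name in classes:
                if name in self.field_classes:
                    self._field = name

    def handle_data(self, data):
        text = data.strip()
        if self._current is not None and self._field and text:
            self._current[self._field] = text

    def handle_endtag(self, tag):
        # Simplification: any closing tag ends the current field.
        self._field = None


# Hypothetical sample page; a real run would read rendered page content.
SAMPLE_HTML = """
<ul>
  <li class="item"><span class="name">Widget</span><span class="price">9.50</span></li>
  <li class="item"><span class="name">Gadget</span><span class="price">12.00</span></li>
</ul>
"""


def extract_records(html):
    parser = RecordExtractor("item", ["name", "price"])
    parser.feed(html)
    parser.close()
    return parser.records


records = extract_records(SAMPLE_HTML)
```

Because the same rule is applied on every run, each record comes back with the same field names, which is what makes the output consistent enough to store and compare.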

Designing Efficient Workflow Automation

Successful automation starts with detailed documentation of the workflow. Every step should be mapped, from identifying the data source to delivering the final output. A scraping tool then lets users build browsing sequences that mimic real navigation while extracting specific information.

A well-defined framework integrates more smoothly with existing systems and reduces rework. It also lets teams adjust their procedures as data sources and reporting requirements change over time.
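
One way to picture such a mapped workflow is as an ordered list of named steps, each passing its output to the next. The sketch below is only an illustration under that assumption; the step names, the sample rows, and the `run_workflow` helper are hypothetical and not part of any specific tool.

```python
def run_workflow(steps, payload=None):
    """Run each named step in order, feeding one step's output to the next."""
    completed = []
    for name, step in steps:
        payload = step(payload)
        completed.append(name)
    return payload, completed


def identify_source(_):
    # Stand-in for collecting rows from a source; the values are made up.
    return ["  Alpha ", "beta", "  Alpha "]


def clean(rows):
    return [row.strip().lower() for row in rows]


def deduplicate(rows):
    # dict.fromkeys keeps the first occurrence and preserves order.
    return list(dict.fromkeys(rows))


def deliver(rows):
    return {"count": len(rows), "rows": rows}


WORKFLOW = [
    ("identify_source", identify_source),
    ("clean", clean),
    ("deduplicate", deduplicate),
    ("deliver", deliver),
]

result, completed = run_workflow(WORKFLOW)
```

Keeping the steps in a plain list means a changed source or a new reporting requirement becomes an edit to one entry instead of a rewrite of the whole process.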

Time and Resource Optimization Benefits

Automated workflows save time by removing repetitive tasks that staff previously handled by hand. Staff are more productive when they spend their time interpreting information rather than gathering it.

- Less manual work lets trained professionals concentrate on tasks that require their expertise.

- Faster processes allow more frequent reporting and quicker adaptation to new developments.

- Lower error rates enhance trust in collected information across teams.

- Scalable processes support growth without proportional staffing increases.

Ensuring Data Accuracy and Consistency

Accuracy remains central to any automated approach. Scraping browsers apply consistent rules during every run, minimizing variation caused by fatigue or oversight. Validation checks can be added to confirm completeness before storage.
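
A completeness check of this kind might look like the following sketch. The required field names and both helper functions are assumptions made for illustration; a real workflow would define its own rules.

```python
REQUIRED_FIELDS = ("name", "price")  # hypothetical required fields


def validate_record(record, required=REQUIRED_FIELDS):
    """Return a list of problems; an empty list means the record is complete."""
    problems = []
    for field in required:
        value = record.get(field)
        if value is None or str(value).strip() == "":
            problems.append(f"missing {field}")
    return problems


def split_valid(records):
    """Separate complete records from rejected ones before storage."""
    valid, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            valid.append(record)
    return valid, rejected


valid, rejected = split_valid([
    {"name": "Widget", "price": "9.50"},
    {"name": "", "price": "3.00"},
    {"name": "Gadget"},
])
```

Only the records in `valid` would go on to storage, while `rejected` keeps each incomplete record together with the reason it failed, so someone can review it.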

Consistent outputs simplify comparison across time periods and sources. This reliability builds confidence in automated workflows and strengthens downstream analysis quality for planning and evaluation purposes.

Security and Ethical Considerations

Responsible automation respects access rules and data ownership boundaries. Scraping browsers provide controls that help users operate within accepted standards.

- Access limits prevent excessive requests that could disrupt source platforms.

- Authentication handling protects sensitive credentials during automated sessions.

- Compliance checks ensure the collected information aligns with usage policies.

- Audit logs support transparency and accountability across automated activities.
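
Two of the controls above, access limits and audit logs, can be sketched together. This is a simplified illustration that assumes a fixed minimum interval between requests; the `Throttle` class and its parameters are hypothetical, and real tools may enforce limits differently.

```python
import time


class Throttle:
    """Enforce a minimum interval between requests and log each one."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval  # seconds between requests
        self._clock = clock               # injectable so tests need not wait
        self._sleep = sleep
        self._last = None                 # time of the previous request
        self.audit_log = []

    def wait(self, url):
        """Pause if the last request was too recent, then record this one."""
        delay = 0.0
        if self._last is not None:
            elapsed = self._clock() - self._last
            delay = max(0.0, self.min_interval - elapsed)
        if delay > 0:
            self._sleep(delay)
        self._last = self._clock()
        self.audit_log.append({"url": url, "waited": delay})
        return delay
```

Calling `wait(url)` before each page request keeps the request rate below the configured limit, and `audit_log` leaves a record of which URLs were requested and how long each one was held back.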

Integration with Broader Data Systems

Automation delivers greater value when connected with storage and analysis tools. Scraping browsers export structured files that align with common processing systems, reducing manual transfers and keeping information current across dashboards.
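
As a rough illustration of a structured export, the sketch below writes records to CSV with Python's standard `csv` module. CSV is assumed here only as a common interchange format; the field names and the `to_csv` helper are hypothetical.

```python
import csv
import io


def to_csv(records, fieldnames):
    """Serialize records to CSV text with a fixed column order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for record in records:
        # Keep only the declared columns; fill missing values with "".
        writer.writerow({name: record.get(name, "") for name in fieldnames})
    return buffer.getvalue()


csv_text = to_csv(
    [{"name": "Widget", "price": "9.50", "internal_note": "skip me"}],
    ["name", "price"],
)
```

Fixing the column order in `fieldnames` keeps every export aligned with the previous one, so a dashboard or spreadsheet can load new files without manual adjustment.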

When teams need guidance on setup or advanced configuration, documentation portals usually provide a clear entry point to the relevant support materials, which simplifies adoption and ongoing management.

Future-Ready Data Operations

Automation prepares organizations for rising data demands without adding complexity. Scraping browsers support adaptive workflows that evolve with changing sources and objectives. By combining a user-friendly design with fine-grained control, they support sustainable data management. Teams get analysis results quickly and with better accuracy, using processes that can scale with future needs.

Smarter Workflow Outcomes

Automated data workflows transform decision-making because they change how information is put to use. Teams gain both efficiency and reliability when they automate the whole process rather than relying on outdated manual methods.

Scraping browsers offer a practical bridge between human intent and machine precision, delivering timely datasets that fuel analysis and planning. With thoughtful design and responsible use, organizations convert complex data tasks into streamlined operations that run smoothly and consistently.
