Table of Contents

- Key Takeaways for Busy Readers

- Inside Hume AI’s Product Ecosystem

- EVI 3 Explained in Depth

- Pricing, Plans, and Value for Money

- Developer Experience and Integration Options

- Use Cases Across Industries

- Ethical Considerations and Voice Cloning Policies

- Reception and Public Sentiment

- Competitive Landscape & Where Hume Fits

- Practical Implementation Strategies

- Roadmap and Future Direction

- Comparison Table: Hume AI vs. ElevenLabs

- Final Thoughts & Adoption Guidance

- FAQs

Ever wished an AI could truly hear how you feel—not just what you say? That’s the promise behind Hume AI and its revolutionary voice interface, EVI 3.

Whether you're building a chatbot, assistant, or customer-facing app, Hume’s technology adds empathy and emotional awareness to the conversation.

Key Takeaways for Busy Readers

- EVI 3 responds in roughly 300 ms, fast enough for natural back-and-forth conversation.

- Custom voices can be created or cloned from short samples, with no complex training needed.

- Prosody and emotion analysis are built in, so the AI adapts not just to words but to tone and pace.

- Flexible pricing tiers cover everything from free trials to enterprise-grade usage with GDPR and HIPAA compliance.

- Legacy EVI 1 and EVI 2 models are being retired by August 2025, so migration to EVI 3 is essential.

Inside Hume AI’s Product Ecosystem

EVI 3 – Empathic Voice in Real Time

With EVI 3, Hume has fused speech recognition, reasoning, and voice synthesis into a single pipeline. Conversations flow smoothly, with no awkward delays.

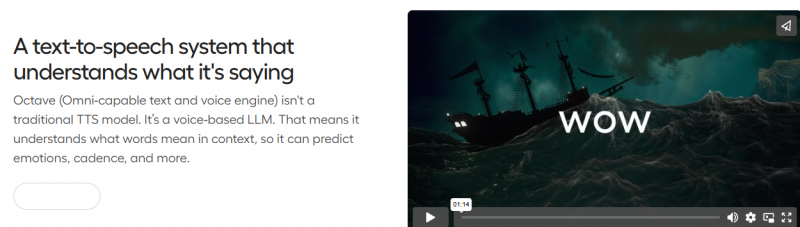

Octave TTS – Emotionally Intelligent Text-to-Speech

The Octave TTS engine lets developers prompt for specific speaking styles—anything from a warm narrator to a sarcastic gamer—while keeping latency ultra-low.

Expression Measurement APIs

Through its expression measurement tools, Hume can detect hundreds of emotional signals across text, voice, and even facial data, enabling richer context in conversations.
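To make "richer context" concrete, here is a minimal Python sketch of how an app might condense per-utterance emotion scores into a one-line hint for a downstream LLM prompt. The score dictionary format, the emotion names, the `top_emotions` and `context_line` helpers, and the 0.1 threshold are all hypothetical placeholders, not Hume's actual response schema.

```python
from typing import Dict, List, Tuple

# Hypothetical input format: emotion name -> score in [0, 1].
# This is NOT Hume's real response schema, just an illustration.

def top_emotions(scores: Dict[str, float], k: int = 3, min_score: float = 0.1) -> List[Tuple[str, float]]:
    """Return up to k (emotion, score) pairs, highest first, ignoring weak signals."""
    strong = [(name, s) for name, s in scores.items() if s >= min_score]
    strong.sort(key=lambda pair: pair[1], reverse=True)
    return strong[:k]

def context_line(scores: Dict[str, float]) -> str:
    """Format the strongest signals as a one-line hint for an LLM prompt."""
    top = top_emotions(scores)
    if not top:
        return "User tone: neutral"
    parts = ", ".join(f"{name} ({score:.2f})" for name, score in top)
    return f"User tone: {parts}"

# Illustrative values for a single utterance.
utterance_scores = {"Frustration": 0.72, "Tiredness": 0.41, "Calmness": 0.05, "Confusion": 0.33}
print(context_line(utterance_scores))
```

Filtering out weak signals before ranking keeps the hint short, so the language model reacts to the dominant tone rather than to noise.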

Conversational Voice Toolkit & Creator Studio

For faster development, the toolkit and studio give you pre-built voices and drag-and-drop customizations that make experimentation simple.

EVI 3 Explained in Depth

The genius of EVI 3 lies in handling the entire conversation loop (automatic speech recognition, language reasoning, and expressive speech output) within one system. Latency stays around 300 ms, so users can interrupt or talk over the AI naturally without breaking the flow.
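For intuition about what a ~300 ms figure measures, here is a minimal Python sketch that times one conversational turn from incoming audio to the first synthesized audio chunk. The `transcribe`, `respond`, and `synthesize` functions are hypothetical stubs standing in for ASR, reasoning, and TTS; EVI 3 performs these steps inside Hume's own system, so this models the measurement, not Hume's architecture.

```python
import time
from typing import Callable, Iterator

# Hypothetical stubs for the three stages of a voice turn.
def transcribe(audio: bytes) -> str:
    return "I need to reschedule my appointment"

def respond(text: str) -> str:
    return "Of course. What day works better for you?"

def synthesize(text: str) -> Iterator[bytes]:
    # Yield audio in small chunks so playback can start before synthesis ends.
    for word in text.split():
        yield word.encode()

def run_turn(audio: bytes, play: Callable[[bytes], None]) -> float:
    """Run one turn and return time to the first audio chunk, in milliseconds."""
    start = time.perf_counter()
    first_chunk_ms = None
    reply = respond(transcribe(audio))
    for chunk in synthesize(reply):
        if first_chunk_ms is None:
            first_chunk_ms = (time.perf_counter() - start) * 1000
        play(chunk)
    return first_chunk_ms if first_chunk_ms is not None else float("inf")

LATENCY_BUDGET_MS = 300  # the ~300 ms figure cited for EVI 3
played = []
latency = run_turn(b"...", played.append)
print(f"time to first audio: {latency:.2f} ms, within budget: {latency <= LATENCY_BUDGET_MS}")
```

Measuring time to the first chunk, rather than to the end of the reply, reflects what the user actually perceives: the AI starts talking while the rest of the answer is still being produced.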

Developers can switch between external LLMs such as Anthropic's Claude, OpenAI's GPT models, or Google's Gemini mid-conversation, while tapping into Hume's library of 100K+ voices. And if none fit? Just clone one from a short audio sample.
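The key to switching models mid-conversation is keeping the chat history independent of whichever model produces the next reply. Below is a minimal Python sketch of that idea under stated assumptions: `claude_stub` and `gemini_stub` are hypothetical placeholder functions, not real client libraries, and `Conversation` is an illustrative class, not part of Hume's SDK.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A "provider" is any function mapping the chat history to a reply.
Provider = Callable[[List[Dict[str, str]]], str]

# Hypothetical stand-ins for real LLM clients.
def claude_stub(history: List[Dict[str, str]]) -> str:
    return f"[claude] reply to: {history[-1]['content']}"

def gemini_stub(history: List[Dict[str, str]]) -> str:
    return f"[gemini] reply to: {history[-1]['content']}"

@dataclass
class Conversation:
    provider: Provider
    history: List[Dict[str, str]] = field(default_factory=list)

    def switch_provider(self, provider: Provider) -> None:
        # History is kept, so the new model sees the whole conversation so far.
        self.provider = provider

    def user_says(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = self.provider(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply

convo = Conversation(provider=claude_stub)
print(convo.user_says("Hi there"))
convo.switch_provider(gemini_stub)
print(convo.user_says("Summarize our chat"))
print(len(convo.history))  # all 4 messages survive the switch
```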

Pricing, Plans, and Value for Money

Hume's pricing is tiered, so costs scale with usage:

- Free and starter plans cover light usage.

- Creator, Pro, and Scale tiers unlock higher concurrency and custom cloning.

- Compliance comes standard, including SOC 2, GDPR, and HIPAA.

- High-volume users can expect voice usage at pennies per minute.

Expression measurement is billed separately—per minute, per word, or per image—so you only pay for what you use.
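Because each billing dimension is metered on its own, a monthly estimate is simply a sum of usage times unit price per dimension. The Python sketch below shows that arithmetic; the rates are made-up placeholders for illustration, NOT Hume's actual prices, so substitute the figures from your own plan.

```python
from dataclasses import dataclass

@dataclass
class Rates:
    # Placeholder unit prices in USD; NOT Hume's actual rates.
    per_audio_minute: float
    per_word: float
    per_image: float

@dataclass
class Usage:
    audio_minutes: float
    words: int
    images: int

def estimate_cost(usage: Usage, rates: Rates) -> float:
    """Sum each billing dimension independently: you pay only for what you use."""
    return round(
        usage.audio_minutes * rates.per_audio_minute
        + usage.words * rates.per_word
        + usage.images * rates.per_image,
        2,
    )

rates = Rates(per_audio_minute=0.05, per_word=0.0001, per_image=0.002)
monthly = Usage(audio_minutes=1_000, words=200_000, images=5_000)
print(estimate_cost(monthly, rates))
```

With these placeholder rates, 1,000 audio minutes, 200,000 words, and 5,000 images come to about $50 + $20 + $10. Floats are fine for a rough estimate; invoicing code would use `decimal.Decimal`.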

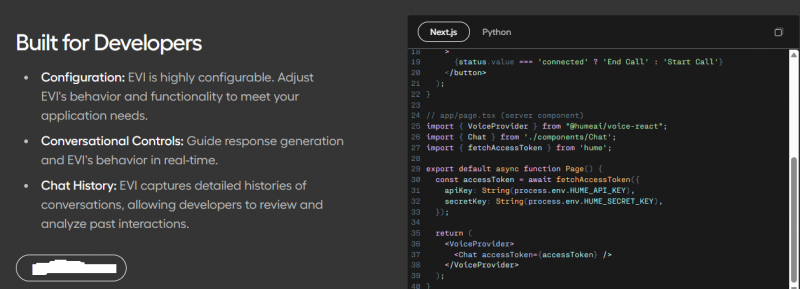

Developer Experience and Integration Options

Getting started is straightforward thanks to Hume's developer docs. SDKs exist for TypeScript and Python (with Next.js support), and a live playground makes testing quick.

Integrations are equally smooth: Vercel offers a starter template, while Twilio and LiveKit enable real-time voice connections. Live conversations stream over WebSockets, while REST endpoints manage chat history and configurations.

Use Cases Across Industries

- Healthcare: Empathic triage agents and supportive check-ins are already being tested by innovators listed on Anthropic’s customer page.

- Customer Service: Tone-aware de-escalation agents aim to raise customer satisfaction (CSAT) scores and reduce caller frustration.

- Education & Training: Adaptive tutors engage students with dynamic tone shifts.

- Gaming & VR: Emotionally expressive NPCs heighten immersion.

- Robotics: Physical devices can now “speak back” with emotional resonance.

Ethical Considerations and Voice Cloning Policies

Hume has been vocal about safety through the Hume Initiative, which promotes ethical standards for emotional AI. Still, experts caution that simulated empathy can mislead users if not disclosed.

Voice cloning, a key feature of EVI 3, raises obvious risks of misuse. Hume enforces strict consent rules and monitors cloning activity to prevent abuse.

Reception and Public Sentiment

Tech outlets like Tom’s Guide praised the realism of Hume’s new voice app while noting it’s “not quite there yet.” On Reddit, early adopters highlighted smooth latency and natural flow, though some questioned whether empathy can truly be “real.”

On Product Hunt, the launch drew strong engagement, with users intrigued by its emotional expressivity compared to ElevenLabs or PlayHT.

Competitive Landscape & Where Hume Fits

Compared to ElevenLabs or PlayHT, Hume focuses less on raw voice cloning and more on real-time empathic conversation. Companionship apps like Replika can simulate bonds but lack multi-modal emotion detection. Hume's unique edge lies in combining low latency, customization, and prosody measurement in one package.

Practical Implementation Strategies

Building an MVP can be done in under a week using the Vercel starter kit, EVI configs, and a Twilio connection. From there, scaling into production requires monitoring emotional accuracy, ensuring consent compliance, and setting KPIs like latency and interruption success.
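The KPIs mentioned above can be computed from simple per-turn logs. This Python sketch assumes a hypothetical `Turn` record (your own logging format, not a Hume API) and reports median and 95th-percentile latency plus the share of user interruptions where the AI actually stopped and listened.

```python
import math
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Turn:
    # Hypothetical log record; field names are illustrative.
    latency_ms: float        # end of user speech to first AI audio
    user_interrupted: bool   # did the user barge in while the AI was speaking?
    ai_yielded: bool         # did the AI stop and listen when interrupted?

def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile, pct in 0-100."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def kpis(turns: List[Turn]) -> dict:
    latencies = [t.latency_ms for t in turns]
    interrupted = [t for t in turns if t.user_interrupted]
    successes = sum(t.ai_yielded for t in interrupted)
    rate: Optional[float] = successes / len(interrupted) if interrupted else None
    return {
        "p50_latency_ms": percentile(latencies, 50),
        "p95_latency_ms": percentile(latencies, 95),
        "interruption_success_rate": rate,
    }

log = [
    Turn(280, False, False),
    Turn(310, True, True),
    Turn(295, False, False),
    Turn(450, True, False),
    Turn(300, True, True),
]
print(kpis(log))
```

Tracking p95 alongside the median matters because a few slow turns (like the 450 ms one here) are what users remember, even when the typical turn stays near 300 ms.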

Roadmap and Future Direction

EVI 1 and EVI 2 will be deprecated by August 30, 2025, so developers should plan migration now. Looking forward, Hume has signaled more language support, richer persona design, and a consumer-facing iOS app for conversational voices.
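A quick audit helps plan that migration. The Python sketch below flags configurations still pinned to EVI 1 or EVI 2 and counts days until the August 30, 2025 deadline. The config records and their `name` and `evi_version` fields are hypothetical; map them to however your project actually stores its EVI settings.

```python
from datetime import date
from typing import Dict, List

DEPRECATION_DATE = date(2025, 8, 30)  # EVI 1 and EVI 2 deprecation date
LEGACY_VERSIONS = {"1", "2"}

def legacy_configs(configs: List[Dict[str, str]]) -> List[str]:
    """Return names of configs still pinned to EVI 1 or EVI 2."""
    return [c["name"] for c in configs if c["evi_version"] in LEGACY_VERSIONS]

def days_left(today: date) -> int:
    """Days until deprecation; negative once the date has passed."""
    return (DEPRECATION_DATE - today).days

# Hypothetical project configs.
configs = [
    {"name": "support-bot", "evi_version": "2"},
    {"name": "tutor", "evi_version": "3"},
    {"name": "triage", "evi_version": "1"},
]
print(legacy_configs(configs))
print(days_left(date(2025, 8, 1)))
```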

Comparison Table: Hume AI vs. ElevenLabs

| Feature | Hume AI (EVI 3 / Octave TTS) | ElevenLabs |
|---|---|---|
| Expressivity | Uses prosody and narrative cues to adapt tone dynamically. | Realistic delivery but less nuanced emotion. |
| Customization | Natural language prompts for styles; quick voice cloning. | Style controls via settings, less prompt-based. |
| Latency | ~300 ms conversational response time. | ~120–300 ms depending on model complexity. |
| Emotional Nuance | Strong emphasis on emotional shifts in real time. | Expressive but relatively static delivery. |
| Voice Cloning / Custom Voices | Clone voices with ~30 seconds of audio; 100K+ persona options. | Voice cloning from samples; large voice library. |
| Ethical & Safety Controls | Built-in safeguards, consent checks, misuse monitoring. | Detection APIs available, evolving safeguards. |

Final Thoughts & Adoption Guidance

Hume AI isn’t just another TTS system—it’s a full empathic voice platform. If you need real-time, expressive AI voices, adopt it now. If you’re primarily experimenting, pilot with the free tier before scaling. And if your use case is purely about static voice cloning, simpler tools might suffice.

FAQs

What’s included in Hume’s pricing?

Each plan comes with character quotas, EVI minutes, and cloning limits.

Does it support multiple languages?

Yes, but coverage varies, so check Hume's documentation for the currently supported languages.

How fast is it?

Conversations average ~300 ms latency, near human speed.

Is it private?

Hume reports SOC 2, GDPR, and HIPAA compliance, which covers the main data-protection requirements for most business use.

Can I use my own LLM?

Yes. EVI integrates with Claude, OpenAI's GPT models, Gemini, and others.

What about voice cloning safety?

Cloning is possible from short samples, but Hume enforces consent and oversight.
